Primary Thoughts

Implementations are the edge

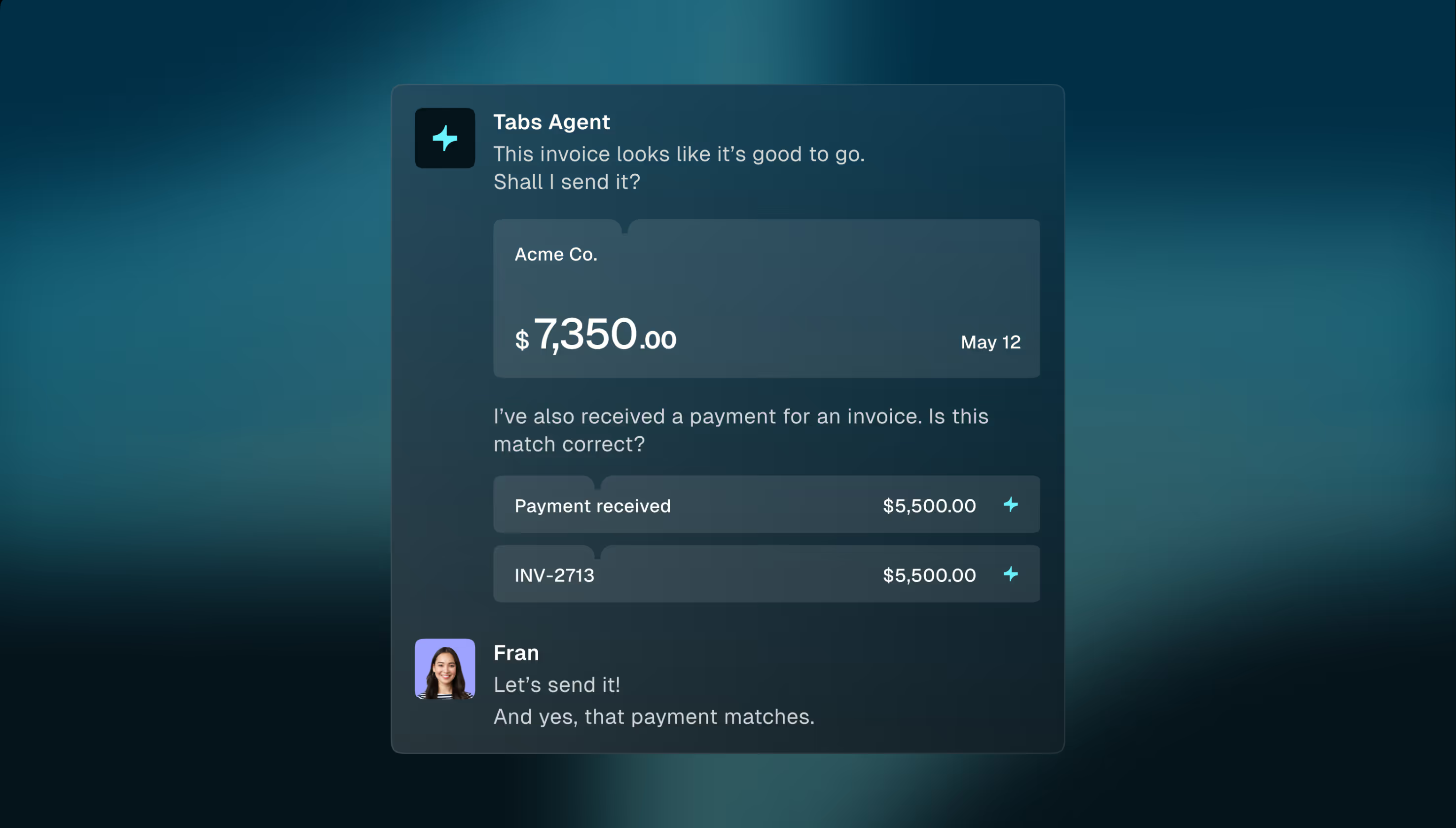

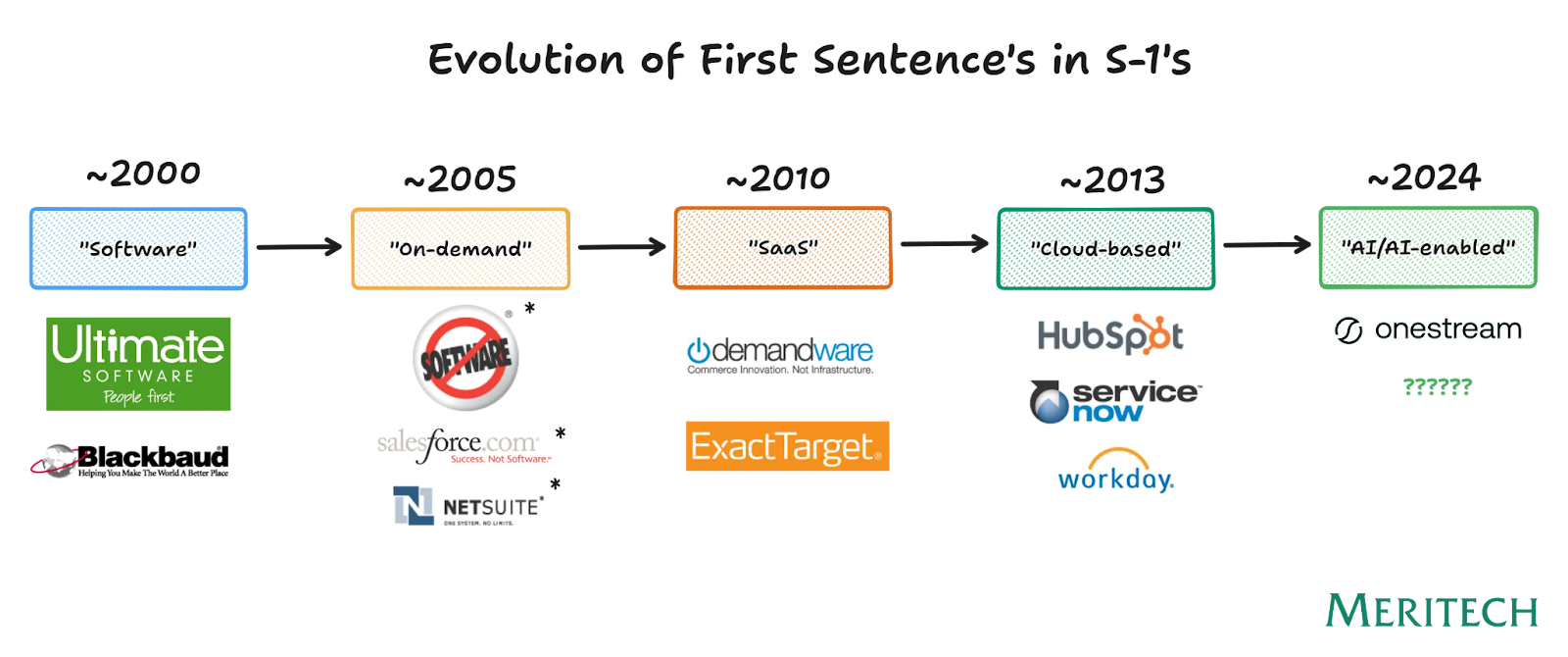

We are entering into a third era of enterprise software. Each era has seen a distinct shift of the onus of value delivery away from the customer and onto the vendor. We believe that value capture by AI-native software companies will hinge almost entirely on their ability to continuously deliver exceptional outcomes — making implementations and customer success mission critical.

We see the three eras of enterprise software as:

- On-prem (“Buy the House”) - large upfront investment, extremely high switching costs, ongoing monetization through professional services, in-sourcing of customization/implementation

- Cloud/SaaS (“Pay Rent”) - medium upfront investment, medium switching costs, ongoing monetization driven through expansion, outsourcing of implementations

- AI-native (“Hotel Rooms?”) - low cost of ownership, increasingly lower switching costs, ongoing monetization driven by value delivered to the business, high need for proper implementation

The On-Prem Era - “Buy the House”

In the on-prem era, enterprise software functioned much like buying a home outright: customers made a large, upfront license commitment long before any value was realized. Extremely high upfront cost of ownership and on-prem delivery meant that picking any system was a monumental decision. From there, software vendors were both able to and forced to extract ongoing value by charging through the nose to send a team onsite for every upgrade, patch, workflow change, or new integration.

As a result, professional services became a significant revenue line and a structural dependency. The knock-on effect was that software had slow improvement cycles, deployments were brittle, and releasing new functionality was expensive and logistically complex. Switching costs were so high that “Customer Success” did not exist as we know it today; rather, post-sale engagement centered on “Account Managers”, whose remit was increasing contract value rather than ensuring product outcomes. The guiding principle of the on-prem era was stability and control, not adaptability or continuous value realization.

The Cloud/SaaS Era - “Sign the Lease”

The transition to cloud introduced a fundamentally different software operating model. Instead of owning software outright, customers switched to recurring subscriptions akin to paying rent. Delivery in cloud environments made software improvements effectively free, with vendors shipping updates in the form of new features and functionality directly to customers. Professional services largely moved away from being a consistent, recurring revenue stream (subscriptions now fill that role) and became more limited in scope: the “one time” upfront customization and onboarding work needed to adjust an “out of the box” solution to an enterprise customer.

The more one-time nature of the implementation meant that the scaled platforms largely outsourced this work, creating one of the largest services markets in enterprise IT. Global systems integrators (GSIs) emerged as a category – a $550B+ services ecosystem to support SaaS adoption. GSIs today represent nearly 9% of all global IT spend.

In this era, the growth path for software companies shifted to license / seat growth within accounts. NRR became the north star metric, and with it came the optimization of expansion, renewals, and upgrades. The subscription model also lowered switching costs (relative to the on-prem era) and birthed the “Customer Success” function to professionalize the processes behind account growth.

SaaS improved time-to-market and made product improvements effectively free. The lower cost of ownership meant the average number of SaaS applications per company grew from 8 in 2015 to 130 in 2022. For complex enterprises, however, value realization still depended on services-heavy, bespoke implementation work. That complexity compounded as tech stacks broadened through the “unbundling” era of software. In this era, how the product was configured, integrated, and rolled out often determined actual ROI.

Entering the AI-Native Era - “Rent a Room”

In this emerging era, “software” is increasingly shifting to usage/value based models where customers effectively “pay as you go” for the value that they’re consuming.

The risk of a “bad” implementation increases exponentially because:

- Revenue starts at consumption - usage/outcome-based pricing moves revenue recognition and ACV growth to consumption, making implementations the primary bottleneck

- Probabilistic nature of AI requires more testing - non-deterministic output makes continuous evals and tuning critical, and model updates mean today’s functionality cannot be taken for granted tomorrow

- Implementations become a moving target - the combo of rapidly evolving underlying models and customer-side inputs means implementations are stale almost immediately. “Go-live” becomes the start, not the end, of implementation. In the SaaS era, it was widely accepted that “Phase II” of an implementation oftentimes never happened as customers were so fixated on the exercise of “going live” that they more often than not lost steam on anything further. AI-era buyers will demand more.

- Software adapts to customers, not the other way around - the SaaS era provided opinionated solutions that were largely “take it or leave it”. AI-native solutions need to capture the nuances of tacit knowledge, tone, and culture of the end customer in a way that makes discovery exponentially more complex.

As a result, AI-native app companies today are each reinventing the implementation playbook from scratch. There is no “system of record” that captures customer-level configurations and playbooks in a repeatable way. Implementations are increasingly a product problem (in fact – we are seeing an increase in implementation orgs structurally rolling up to the CTO). Companies are throwing bodies at the problem – and expensive ones at that, in the form of “FDEs”.

The last generation of customer success software (e.g. Gainsight, Catalyst) was largely a disappointment. The main challenges were 1) mediocre ROI, 2) thin budgets for CS leaders, 3) existing solutions that were largely reactive to what had already happened (oftentimes too late) rather than proactively recommending intervention, and 4) massively painful data ingestion.

This is now changing. The AI era is blurring the line between pre- and post-sales as we move to a continuous delivery model. In this world, the implementation org is no longer a cost center; it is becoming a core revenue driver.

Early observations in the market suggest:

- Persistently wide CARR > ARR gaps – Anecdotally, we are regularly seeing CARR 3-5x higher than live ARR as many AI app providers enter “land grab mode in an aggressive market”. Strong market pull is no longer the hallmark of PMF given top-down AI experimentation mandates. Retention is the new bar for PMF. This is shifting implementations from an “expensive” problem to a board-level “hair on fire” priority.

- Structurally lower gross margins – 70-80% SaaS margins are a thing of the past. Up to 56% of SaaS vendors now have variable pricing. With unit costs scaling with model usage, poorly tuned systems will become more expensive to deliver (as you have to re-run queries) and lead to weaker gross margins.

- “Implementing” is a continuous state – the underlying models and products are evolving so quickly that implementations are basically out of date as soon as they are complete. As vendors and customers alike are adopting agentic workflows in real time, there needs to be constant communication and adjustment (vs. a customer success team parachuting in right before the contract expires!). Again, this is a stark contrast to the SaaS era, where both vendors and customers became complacent with stale implementations until they reached the point of churn risk.

- GTM teams need new metrics around customer health – GTM teams will rely on new leading indicators of customer health in addition to the tried and true NRR metric. NRR is a lagging indicator of customer health and AI-native companies do not have the luxury to wait it out. Forward-thinking leaders are adopting new metrics to hold themselves accountable to customer value (e.g. Outcome Metrics 1.0) and we expect some of these to become the new normal.

- The right talent to do this is way more expensive – “FDEs” of every kind are en vogue – demand has grown 12x in the past year. Given the product depth, subject matter expertise, and often technical skill needed to get these implementations right, this talent is much more expensive: roughly 1.5-2x the cost of a typical CS associate. Historically a good implementation manager could manage 8-10 projects at a time; the deeply embedded model is scaling that way down. Team composition is changing too, with AI-native companies placing 50% more of their GTM teams in post-sales roles (vs. SaaS peers). Add this up and the total cost of implementations skyrockets.
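To make the CARR > ARR observation above concrete, here is a minimal sketch of the arithmetic. It assumes CARR means contracted ARR (signed but not necessarily live) and uses a hypothetical $1M of live ARR; only the 3-5x multiples come from the observation itself.

```python
def live_share(carr_multiple: float) -> float:
    """Fraction of contracted ARR that is live when CARR = multiple x live ARR."""
    return 1.0 / carr_multiple


live_arr = 1_000_000  # hypothetical figure, for illustration only

for multiple in (3, 5):  # the 3-5x range cited above
    carr = multiple * live_arr
    not_live = carr - live_arr  # contracted revenue still waiting on implementation
    print(
        f"CARR at {multiple}x live ARR: ${carr:,} contracted, "
        f"${not_live:,} not yet live ({live_share(multiple):.0%} live)"
    )
```

Read this way, a 3-5x gap means only about a fifth to a third of contracted revenue is live, which is why implementation speed becomes the bottleneck on revenue.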

The bottom line is that the onus of ownership is shifting from the customer (in the pre-SaaS world) to almost entirely the vendor. Getting in the door is now easy; staying relevant by actually delivering value is much more difficult.

So What Does this Mean?

For Enterprise AI Apps, the implementation layer is emerging as the true battleground for category leadership. What endures is a vendor’s ability to implement, align, and maintain AI systems in live environments.

So the questions for a company in this space become:

- Is this a point in time problem? It’s still early days and most of the app layer is in “land grab” mode. At a more steady state of market penetration and product maturity, does the severity of the problem change?

- Can it be productized? Each category and even each end customer may require a bit of a different approach. Is there a set of collective primitives that will emerge that allow for repeatability (vs. just becoming a modern systems integrator)?

- Will implementations be “too” mission critical? Is a good implementation too much of the “secret sauce” to the point where each AI-native app company will want to own the process themselves? Anecdotally – we don’t think so. From what we’ve seen, although implementations are a huge problem, companies would rather dedicate limited resources towards core product development.

AI-native companies need to cross a new kind of chasm. The willingness to try is largely there, the willingness to stay is yet to be seen. We believe this dislocation creates a unique “why now” for a new “system of record” that is centered on the existing customer and enables continuous, ongoing implementations to emerge.

A handful of new companies have emerged to tackle this problem and automate implementations leveraging AI. There are three main approaches:

- Arm the SIs – companies like Auctor build tools to help traditional SIs deliver better implementations.

- Replace the SIs – these are tech-enabled services businesses that are focused on replacing the legacy consultants that are brought in to implement heavyweight software systems. Tessera focuses on ERP migrations while Echelon centers around ServiceNow work.

- Augment (and over time automate) internal delivery teams – systems like Axiamatic serve companies going through transformations while Genera supports AI-native companies that have hefty implementation processes.

As last mile delivery of software, and agentic software in particular, becomes increasingly critical, we know that the “FDE” role is only going to continue to grow in popularity. Implementations are becoming a front office problem, and there’s a massive opportunity to support this delivery problem as we shift into this new era of software consumption.


Tokaido Health raises $25M

Healthcare costs for employers continue to skyrocket with no end in sight. Here in the US, we have put ourselves in an untenable position with very few solutions in the market that actually bend the curve. Look at where the cost is growing and the picture is hard to miss: pharmacy is the fastest-growing line item in American healthcare, and the overwhelming majority of that growth has nothing to do with new science.

Instead, it’s about misaligned incentives and a lack of understanding. More often than not, the party choosing a patient’s medication is not thinking about the site of care, the drug’s price, or the downstream costs. This lack of understanding means billions of plan sponsor dollars get wasted every year.

Ben Ryugo, CEO & Founder, spent years at Included Health, where he sat inside one of the most operationally complex care navigation businesses in the world and saw these misaligned incentives around medication from every angle – payer, employer, member, and provider. He lived and breathed this problem on a daily basis, without a clear solution. Tokaido Health is changing that.

Tokaido Health is an AI-native medication steerage platform anchored in behavioral economics. In its simplest form, the company identifies same-or-better medications that cost less, and pairs those recommendations with incentives that compel members to actually make the switch. They deliver a clean and simple value prop to employers, while also delivering a better experience to patients.

AI now enables Tokaido to do this work at member-level granularity. Unlike navigation platforms of the past, this is not about building a static rules engine bolted on top of a benefits portal. To properly navigate a patient through any medical journey, the platform has to reason across clinical context, formulary specifics, member preference, geography, and incentive structure across millions of decisions.

To start, Tokaido delivers four motions on a single platform that have historically lived across separate vendors: site-of-care steerage, biosimilar substitution, formulary optimization, and polypharmacy reconciliation. The team sees this as just the beginning. The roadmap from here keeps expanding the surface area of decisions Tokaido can make on a member's behalf, and there is no shortage of places where misaligned incentives are pushing members toward the wrong choice.

Critically, Tokaido layers on top of the existing PBM and benefits stack. No PBM swap, no plan design overhaul, no fight with the incumbent ecosystem. The team does the concierge work of moving the member from the higher-cost option to the lower-cost one. The plan wins, the member wins, and the independent specialty providers absorbing biosimilar volume win.

We are thrilled to partner with Ben, Gus, Andrew, Samir and the rest of the Tokaido Health team as they come out of stealth and announce their $25M financing led by Norwest, Primary, Next Ventures, Constellation, Scrub Capital, and others.

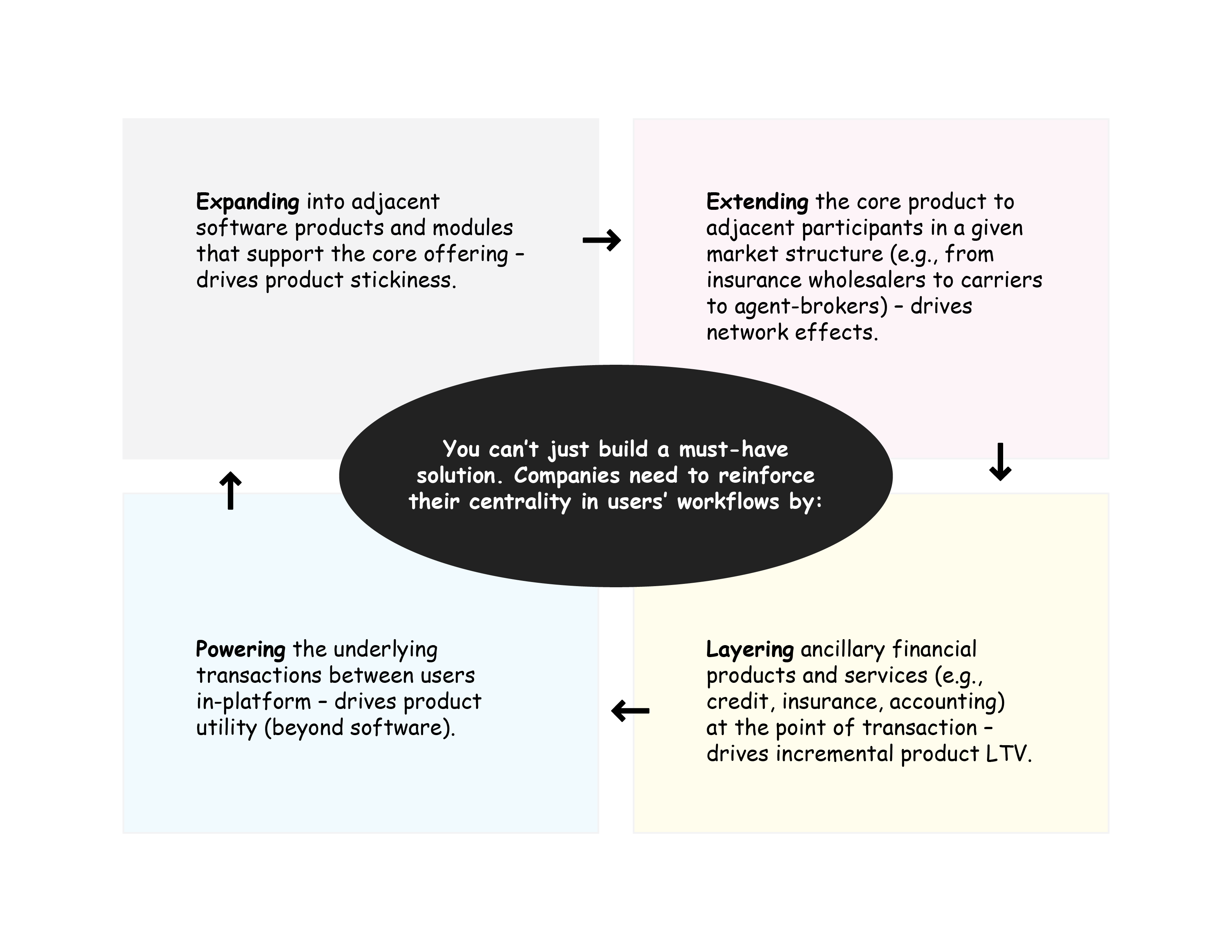

Beyond Subscription: The business model case for AI-Native vertical EHRs

In Part 1 of this series, we laid out why we believe AI-native, vertical EHRs could represent one of the more interesting bets in healthcare software right now. We described the structural dynamics that make certain specialties worth targeting: provider independence, workflow complexity, administrative burden, and competitive fragmentation.

We closed with a question we've been spending time on since: does the EHR market need business model innovation to be truly venture scale?

The “SaaSpocalypse” has made this question hard to ignore. If the terminal value of the world's largest software companies is being written down, verticalized ambulatory EHR software is not immune.

At its core, the "SaaSpocalypse" debate is a structural one: when AI can automate the workflows per-seat software was built to support, does the subscription model hold? For vertical EHRs, the question cuts even deeper. These platforms sit on top of enormous clinical context and currently do almost nothing with it. AI doesn't just put pressure on the subscription model. It opens the door to a better one, priced on consumption rather than seats.

That pressure has direct implications for the incumbents. The last wave of EHR PE (Thoma Bravo's acquisition of NextGen, Warburg's backing of ModMed, Francisco Partners' ownership of AdvancedMD) was underwritten on a familiar thesis: sticky subscriptions, low churn, customers too entrenched to migrate. If AI-native entrants show up with better software at a cheaper price and a business model that makes money elsewhere, how much of that stickiness really holds?

The EHR business model status quo

Most vertical EHRs today charge per provider, per seat, or as a flat monthly fee. It's a clean model: predictable, easy to price, easy to negotiate. But we believe it also dramatically underprices the value that an EHR, sitting at the center of a practice's workflow, is actually positioned to deliver.

EHRs capture an immense amount of data: every prescription decision, every diagnosis code, every prior auth submission, every referral, every lab order, every payer interaction, every billing event. And yet they do almost nothing with it, which is reflected in their pricing. Ambulatory EHRs capture 1-2% of the revenue a practice generates. The data live in the EHR, but the actions and labor happen elsewhere.

A pure subscription specialty EHR runs $150 to $1,500 per provider per month depending on specialty and practice size. A mid-sized gastroenterology practice with 10-15 providers might net a $60-100K ACV for a company like ModMed, before you layer in any RCM take rate. Not nothing, but modest given how central the software is to the practice. Even with RCM layered in, CAC is high and implementation timelines are long, which is a big part of why this category has been hard to build at venture pace. This pressure has pushed operators in this category to rethink how an EHR should actually make money.
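The ACV figures above can be sanity-checked with a few lines of arithmetic. This is a rough sketch, not a pricing model: the function names are illustrative, and pairing the $60K figure with 10 providers and the $100K figure with 15 is an assumption made only to back out an implied price.

```python
MONTHS_PER_YEAR = 12


def subscription_acv(providers: int, price_per_provider_month: float) -> float:
    """Annual contract value for a pure per-provider subscription."""
    return providers * price_per_provider_month * MONTHS_PER_YEAR


def implied_monthly_price(acv: float, providers: int) -> float:
    """Per-provider monthly price implied by a given ACV."""
    return acv / (providers * MONTHS_PER_YEAR)


# Outer bounds from the quoted $150-$1,500 price range and 10-15 providers.
floor = subscription_acv(10, 150)
ceiling = subscription_acv(15, 1_500)
print(f"Possible ACV range: ${floor:,.0f} to ${ceiling:,.0f}")

# Price implied by the quoted $60-100K ACV (assumed pairing, see above).
print(f"Implied price at $60K / 10 providers: ${implied_monthly_price(60_000, 10):,.0f}")
print(f"Implied price at $100K / 15 providers: ${implied_monthly_price(100_000, 15):,.0f}")
```

Under these assumptions, the quoted ACV corresponds to roughly $500-$560 per provider per month, toward the lower half of the stated price range.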

Moving past SaaS in EHRs

Expanding beyond pure SaaS isn't a new idea. Flatiron Health, athenaHealth, and Practice Fusion have all tried it, each with a different monetization approach, and each with varying degrees of success.

Flatiron Health’s vision was that pairing deep specialty ontology with a data asset would deliver outsized value. Flatiron bought an oncology EHR, Altos Solutions, that enabled them to structure real-world clinical data that could be sold to pharma for clinical trial optimization, drug development R&D, and regulatory support. They translated messy, unstructured notes into curated, analyzable datasets. The EHR was the entry point; the curated oncology dataset was the business.

Roche acquired Flatiron in 2018 for $1.9B, but the acquisition ultimately undermined the very business model that justified the price. Flatiron’s value rested on being a neutral data partner to all of pharma, and the moment Roche owned them, competitors became wary of working with them. Independent governance didn’t fix that, and Roche has since sold off parts of the Flatiron business at a fraction of the sale price.

While this data platform approach could theoretically work in other specialty markets, the post-acquisition structural problem is the central question to resolve.

Athena built its business around a different insight: if you process the revenue cycle for a practice, you should share in the outcomes, not just charge for the software. Although athena is now privately held by Bain Capital and Hellman & Friedman, its public market history tells a clarifying story. The business launched in 2000 not as an EHR but as a cloud-based revenue cycle company. In fact, their EHR product didn't come until 2006. When you look at the public filings, the revenue composition reflects that origin: the overwhelming majority of revenue came from RCM and a percentage of collections, not software subscriptions. Athena is widely categorized as an EHR company, but the business model is fundamentally different from a pure play SaaS company. The EHR was the wedge, while RCM was the business. That's a large part of why $17B was a reasonable price for a company that was nominally "just an EHR."

It is key to note that while athena pioneered this model, most large specialty EHRs now bundle RCM services very tightly with their EHR product including ModMed, Nextech, eClinicalWorks and others. So even in a world of SaaS disruption, these ambulatory EHRs have more revenue durability than most people believe.

Practice Fusion took the most aggressive swing, offering their EHR for free. At its peak, it was one of the largest EHR networks in the country by provider count (valued at around $1.5B in 2016), built entirely on a free model. Revenue came primarily from advertising, de-identified data sales, and clinical decision support. The last of these is where the company got into trouble, after accepting payment from a pharmaceutical company in exchange for encouraging physicians to increase prescriptions for extended-release opioids. Allscripts acquired the company in 2018 for $100M, and Practice Fusion ultimately settled with the DOJ for $145M. Legal issues aside, the underlying hypothesis and vision were compelling: when you have access to a broad clinical network, the monetization surface is substantially larger than a seat license. We’ve seen a similar thesis play out more successfully with Doximity and now OpenEvidence.

So are there opportunities for innovation?

The through-line across these examples is that the business model follows the product. In Part 1, we laid out three archetypes for companies looking to unseat the incumbents.

- Action layers that sit on top of EHRs. This is where most venture dollars have flowed to date. Automate workflows, integrate with the existing EHR, prove ROI, expand. The catch is that you're building on a platform you don't own, and the incumbent can pull the rug whenever they decide to.

- Modern systems of record. The clean-sheet rebuild: better data models, modern architecture, native automation from day one. In theory, owning the system of record unlocks all the same business model optionality as a full vertical OS, but the timeline to get there is brutal.

- Full vertical operating systems. This is where the business model fully unlocks. These platforms combine the system of record with end-to-end workflow execution and layer in revenue streams across RCM, prior auth, specialty pharma routing, diagnostics, and data. Companies like Ease Health appear to be taking this approach. The EHR subscription isn’t the prize here, but rather a way to lock in the customer and capture all your value elsewhere.

Of the three, the full vertical OS is the one we keep coming back to. It's the only archetype that holds up across the dimensions we care most about: AI leverage, long-term defensibility, and TAM expansion.

AI-driven consumption business models

The historical examples point to the same conclusion: stand-alone seat-based pricing is not the ideal business model for EHRs. Instead, Flatiron, athena, and Practice Fusion each found their way to a consumption-driven business model. Most notable is athena given they structurally shifted how the entire ambulatory EHR market operated and pushed companies deeper into the world of RCM.

So does AI create a new structural business model shift in this market?

We believe it does, across two dimensions: (1) improving the economics of existing consumption models and (2) enabling new consumption models to emerge more easily.

Improving existing consumption models:

RCM

RCM is where AI has the clearest path to value in this stack. The AI RCM market has already attracted roughly $1B in cumulative venture funding, with companies like Akasa, Cohere Health, and Adonis growing aggressively. The story is simple: AI reduces human labor across coding, billing, denial management, and appeals while improving collection rates. Today, athena takes 4-8% of billings for its services, and with AI properly implemented, its profit margins should increase in a meaningful way.

In an ambulatory market where the dominant EHR doesn't provide RCM services, an AI-native competitor can offer a better EHR and undercut the standalone RCM vendor at the same time. The combined product is stronger than either piece sold on its own. But in markets where the incumbent EHR already bundles RCM, that wedge is closed, and an AI-native challenger has to find other consumption streams to compete on.
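To see why the RCM stream matters so much relative to the subscription, here is a rough comparison of the two percentages quoted in this piece: the 1-2% of practice revenue a subscription EHR captures and the 4-8% of billings athena takes. The $5M practice is hypothetical, and treating billings and practice revenue as the same base is a simplifying assumption.

```python
def fee(base_dollars: int, rate_bps: int) -> int:
    """Fee in whole dollars for a rate given in basis points (100 bps = 1%)."""
    return base_dollars * rate_bps // 10_000


annual_billings = 5_000_000  # hypothetical practice, for illustration only

# 1-2% subscription capture vs. 4-8% RCM take rate, both quoted in this piece.
subscription = (fee(annual_billings, 100), fee(annual_billings, 200))
rcm = (fee(annual_billings, 400), fee(annual_billings, 800))

print(f"Subscription at 1-2%: ${subscription[0]:,} to ${subscription[1]:,}")
print(f"RCM take at 4-8%: ${rcm[0]:,} to ${rcm[1]:,}")
print(
    f"RCM revenue is {rcm[0] // subscription[1]}x to "
    f"{rcm[1] // subscription[0]}x the subscription"
)
```

On the same base, the RCM stream is 2x to 8x the subscription regardless of practice size. These are revenue figures, not margins: RCM has historically carried labor costs, which is the part AI is expected to compress.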

Enabling new consumption models:

Practice management

Many EHRs already layer in practice management capabilities, yet none have taken the step to truly “own” the administrative function and labor associated with it. We believe that’s where the next wave of value will be captured, and it has two dimensions: the labor that keeps the practice running and the analytics that grow it.

On the labor side, AI agents are already automating intake, scheduling, insurance eligibility, and other workflows that today are human-intensive and largely decoupled from care delivery. But the shift is not just in automation, it's in how these workflows are monetized. SaaS monetization has followed a clear arc: first, companies charged for software. Then the smartest companies realized they could charge for payments. Now, the next frontier is charging for labor. This is not feature expansion. It's a structural shift in how software companies will capture value. Rather than selling a seat license, companies are increasingly monetizing on work completed: per intake processed, per appointment scheduled, per eligibility check verified. In many cases, they are effectively becoming the staffing layer themselves, with a pricing model that directly maps to labor displacement and throughput.

On the intelligence side, the same system that captures every marketing touch, scheduling event, prescribing decision, and claim outcome is uniquely positioned to turn that exhaust into operational insight: CAC by referral source, LTV per patient, case acceptance rates by provider, the unit economics of an MA hire. Today, most practices either do this in spreadsheets or fly blind. Incumbent EHRs surface dashboards that technically exist but rarely answer the questions a practice owner actually needs to run their business. An AI-native system of record closes that loop natively, which is what it really means to run the practice and not just record it.

Folding staffing and analytics into the EHR is not just product expansion. It's the logical end state of this monetization shift. The EHR that moves first to own both layers will not just be a system of record. It will be the system that actually runs the practice end-to-end.

Clinical decisioning & workflows

On the clinical side, incumbents have conspicuously ignored assisting in clinical decisioning and the workflows that sit alongside these decisions. Instead, companies like Navina aggregate data to present a full patient picture at the point of care, while others like OpenEvidence help ensure that providers make the best decision possible at the point of care. Yet, a lot of these insights are driven by core data that lives within an EHR.

It’s not a large leap to imagine that an EHR could instead own this revenue stream directly, and then orchestrate the administrative and agentic workflows that flow from each clinical decision. The monetization doesn’t have to look like a seat license either. Doximity and OpenEvidence have both shown that alternative business models, including advertising and pharma sponsorship, can work at scale in healthcare software.

Pharmaceutical services

Specialty pharma may be the most overlooked opportunity for EHRs. Startups and non-EHR incumbents have already built consumption-driven businesses around it, yet most EHR companies have yet to attack this part of the market.

Across high drug-spend specialties (oncology, ophthalmology, rheumatology, dermatology, gastroenterology), significant dollars flow between a physician's prescribing decision and the moment a drug is dispensed. An EHR with full clinical context is positioned to own that entire chain: prior auth, hub enrollment, pharmacy routing, and group purchasing.

Companies like Tandem and Latent have already grown aggressively by automating prior auth, acting as non-dispensing pharmacies, and monetizing through pharmaceutical hub services. To do this, they rely heavily on existing EHR workflows to drive their growth. A new EHR built from scratch has no reason to cede that position. In addition to this workflow, there is also a drug GPO opportunity. Cencora and McKesson have already demonstrated that tying an EHR to a GPO is legally viable, yet no one else has followed. An AI-native EHR that automates the administrative overhead and takes a slice of drug spend would be a fundamentally different kind of business.

How the TAM expands

Not all specialties are created equal for this model of vertically integrating practice management, RCM, clinical decisioning, and pharma workflows.

We believe the specialties most worth targeting have high drug spend per patient, high administrative intensity relative to patient volume, and clear clinical AI use cases. The more of those boxes a specialty checks, the more the full vertical OS model makes sense over a pure subscription play. To help bring this to life, below is an illustrative TAM for gastroenterology. Worth flagging that the clinical AI aspect is the most uncertain piece here, given ongoing unknowns around how this care should be delivered and how clinical decisioning will be monetized.

In this framing, pricing the EHR cheaply isn't a bug, it’s a feature that helps drive a more aggressive customer acquisition strategy.

So are ambulatory EHRs a venture opportunity?

When you look at the above TAM, the short answer is yes. The TAMs aren’t massive, but they’re large enough with enough upside to make this category genuinely interesting.

There are still a lot of execution questions to answer around product sequencing. Do you need to build all of this at once? Do you start with a unique wedge? Some combination of a large product build, plus a unique light-weight wedge? There's no universal answer. The answer will be driven heavily by the specialty, the customer, and what you can credibly own from day one.

If you're a provider or operator who sees your practice in any of what we've described above, we want to hear from you. And if you're building or interested in building in this space, we'd love to talk.

- Email: sam@primary.vc & hannah@primary.vc

- LinkedIn: Sam Toole & Hannah McQuaid


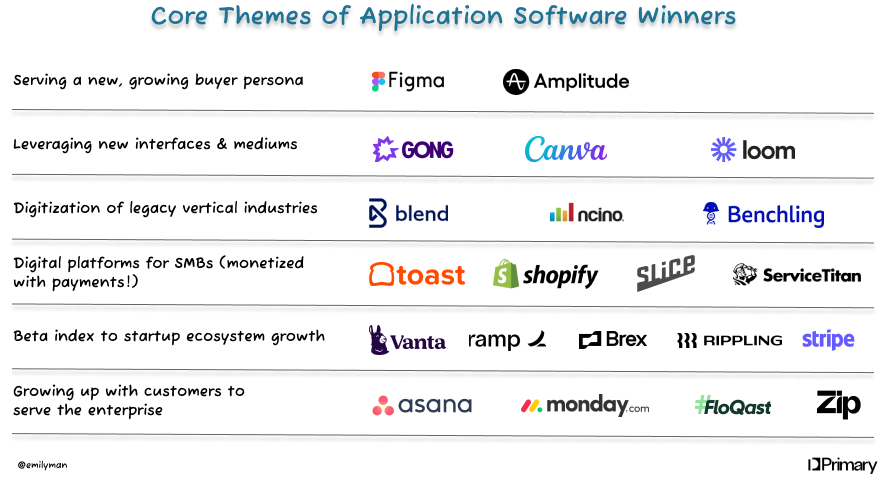

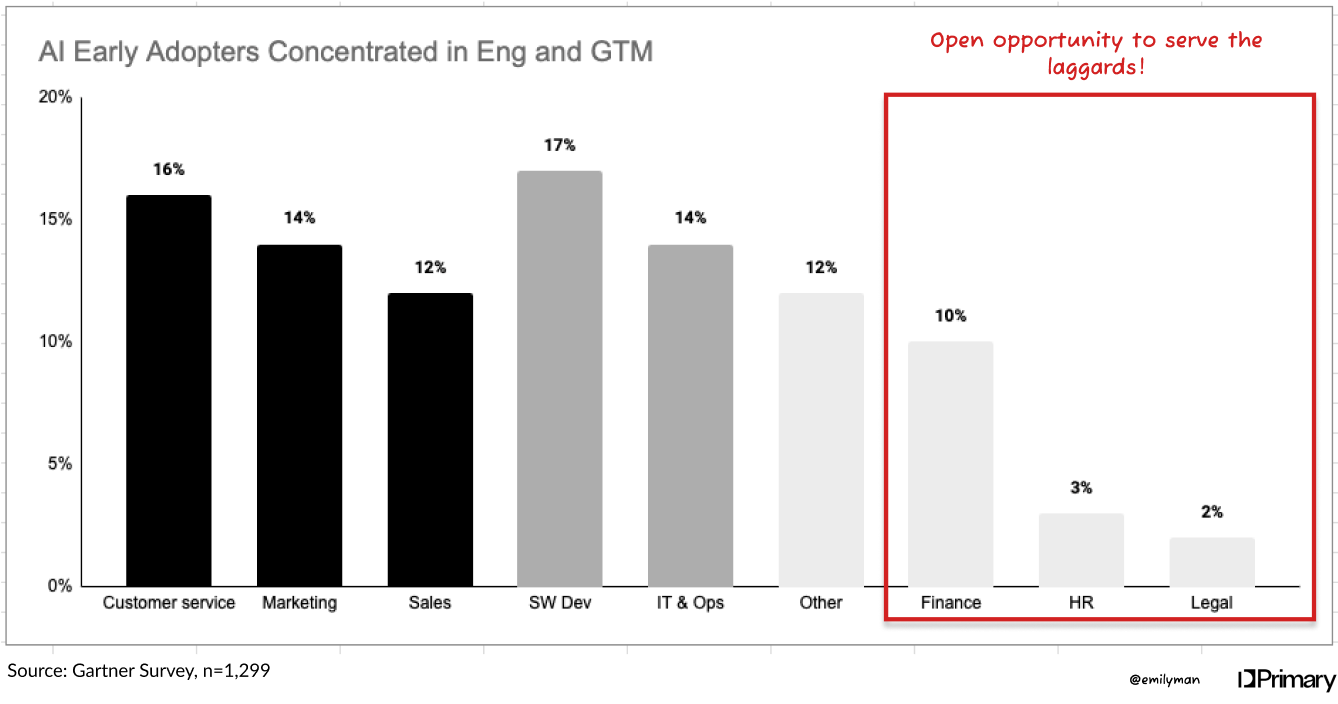

Our 2026 Request for Startups

AI is moving at warp speed, with entire categories being created and destroyed seemingly overnight. Agents are systematically translating the “knowledge” part of knowledge work into systems and code. For the first time, many locked-up $1T+ profit pools are in play.

There are four meta-trends and tailwinds that we believe will create opportunities for value creation. For each, we’ve described some examples (not exhaustive!) of the types of opportunities that we’re actively looking to invest in.


Extreme volatility is the new normal

Between COVID shutdowns, tariffs, and now AI uncertainty, our economy has been enduring massive shock after massive shock. And if there’s anything the markets hate the most, it’s uncertainty. Add to that new unpredictable costs in token consumption, power usage, and more, and it’s just harder and harder to control risks where it matters most. We believe the ability to manage and hedge risks is going to become more important than ever in a rapidly changing world.

Planning software for a usage-based world

SaaS isn’t dead, but seat-based pricing most definitely is. Up and down the P&L, everything that was once “fixed” is becoming variable. Revenue is shifting to be usage or consumption based. On the cost side, AI spend is the fastest-growing and least understood line item on the P&L. We’re still in the “tokenmaxxing” era, but play out the tape: could token/inference costs soon overtake G&A as a line item? Headcount planning, budgeting cycles, and even (human) employment offers are going to fundamentally reorient to include the costs of AI, and organizations are going to need better ways to understand and plan for them.

AI-native catastrophic risk simulation software

Tail risks are happening more frequently and they’re breaking risk models. We just hit our sixth consecutive year where natural catastrophe losses were $100B+. What most traditional actuarial models miss is that they price risks in isolation and rely heavily on backward-looking loss data. The insurance industry needs new modelling software to help it better understand how various risks cascade into one another. Can world models or new AI architectures help fill that gap?

New ways to isolate emerging factors

It’s starting to feel absurd that the markets close…since the world definitely doesn’t. It’s clear that the markets are clamoring for new ways to get financial exposure to the changes in the world as they happen in real time. Prediction markets are now at $20B+ in volume. The Iran crisis caused oil perps to surge to $48B on Hyperliquid over a weekend. We believe the markets are hungry for new ways to get or maintain new exposures and there will be opportunities for new ETFs and trading venues to emerge.

Agents are the new ICP

BCG estimates the agent market will grow at 45% CAGR, and that feels like a tragic underestimate. Smart, autonomous agents are here and are quickly being adopted across consumer and business operations. They search, discover, understand, and choose in fundamentally different ways. Our software and our internet were both designed by humans for humans, not agents. Solutions here will bridge that gap.

The communication layer for agentic teams

If you think alert fatigue is bad now… imagine what happens when you add human-agent and agent-agent communications into every business workflow. When half the workforce becomes agents, we’ll need a new way to manage and communicate (and it’s definitely not email + chat!), both inter- and intra-organizationally.

Implementations need a new system of record

Implementations were already the pain in the ass that killed many a software sale. In the AI era, a botched implementation means botched outputs, which means botched outcomes. And it’s not one and done either. Each deployment needs to keep iterating and adapting in tandem with the needs of the customer. The CARR to ARR gap for every AI-Native software company continues to widen, and churn for poor-ROI tools is also on the rise. This gap can’t be filled with FDEs forever. There’s a role for a new system of record to emerge that starts at implementation and moves to be the source of truth for customer-specific agent deployments.

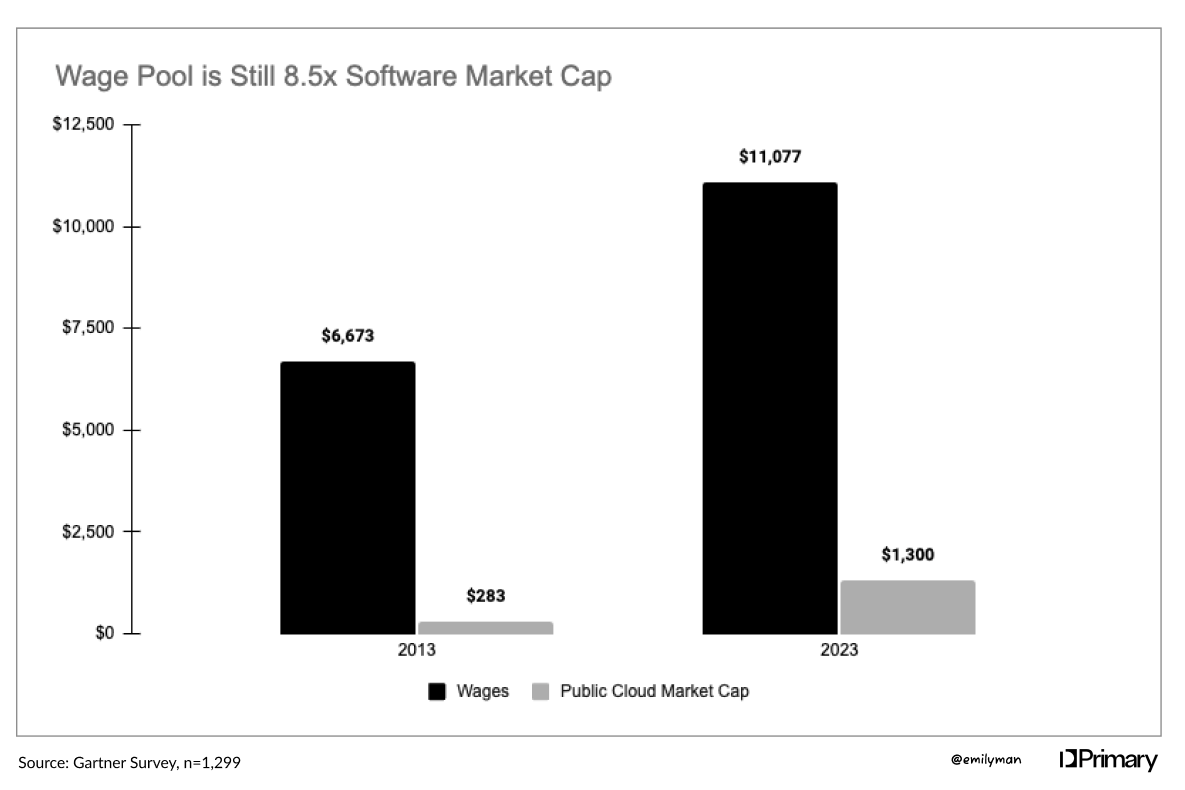

Solving structural labor shortages

75% of accountants are retirement age. Insurance is facing an upcoming gap of 400K jobs. The average wealth advisor is 51 years old. AI is eating into the $6T of white-collar payroll spend, but it’s not all a replacement story. And this doesn’t even consider economies around the world with rapidly aging populations. There are many structural labor gaps emerging, particularly across “old-school” industries where AI can serve as the structural unlock.

No more phone trees please

The voice context on a call is infinitely richer than a chatbox and the technology is roaring past the uncanny valley. We’ve only just scratched the surface of applications of voice technology and what that means for democratizing the “white glove service” that is associated with always being just a call away. Advisory calls, deal closings, complaints & claims – all run on legacy processes, generate zero structured data, and are staffed by a constantly changing roster. We’re looking for the solutions that aren’t afraid to get deep, unlocking vertical-specific or even customer-specific voice applications across industries.

AI accounting solutions for every industry

In the $650B accounting services market, we believe that the next iteration of AI accounting will be deeply verticalized. Property accountants spend hours reconciling CAM charges, construction firms track detailed percentage-of-completion and project-based costs, and healthcare providers juggle payer mix accounting, while retailers live in tangled webs of deductions, promotions, and rebates. Every vertical with complex value chains, ownership structures, and billing will need its own vertical-specific accounting solution (and dare we say…system of record?).

Finance needs new plumbing

The GENIUS Act and MiCA mean that, for the first time ever, we’re starting to have clarity around stablecoins and digital assets. At the same time, the markets are more global and 24/7 than ever. Total stablecoin volume has surpassed $1.8T, up from $668B a year ago. We’ve crossed the chasm regarding institutional interest and comfort in stables, blockchain, and tokenization, but most traditional players are held back by 40+ year old infrastructure. The financial stack is being rewritten from the ground up in real time – but there’s a real need to bridge the gap between old and new.

‘Tokenize it’: the US equities edition

There are 185M stock market participants in the US. The global demand is easily 10x that. Foreign holdings of US securities have grown 10x in the last 20 years, but this is still largely out of the hands of retail traders. The demand is there. But for billions of people in emerging markets, where crypto adoption is high while equity participation is <10%, the settlement, custody, and compliance infrastructure to actually buy a share of Apple doesn't exist. Add to that private equity firms, private companies, and other real-world assets, which are going to be opened up to the world.

Back office infrastructure supporting a T+0 world

Equity settlement has gone from T+5 to T+1 over the last 30 years, and now T+0 (i.e. instant settlement) looks like it’s on the horizon. Faster settlement reduces risks and frictions in the system. The issue: the back office isn’t ready. Clearing, reconciliation, corporate actions, proxy reporting – all still run on batch processes, manual workflows, and systems built decades ago. Broadridge alone, founded in 1962, processes $10T in daily transactions and proxies for 70%+ of US public companies… in the new world, that’s not going to cut it.

AI-Native + stablecoin-native treasury management system

Today’s treasury management and ERP systems were built for a fiat-focused world, helping customers manage and move their own cash across dozens of bank accounts around the world. However, companies are already leveraging stablecoins for inter-company transfers, sweeping excess cash into tokenized money markets, and paying contractors across borders in real time. Nobody has built the connective tissue for a business running over multiple sets of rails, while the last generation of software in this space is still extremely clunky, manual, and expensive. There’s an AI-Native + stablecoin-native solution to be built.

Agentic workflows that backdoor into your new insurance core

Insurers have faced a lose-lose for years: keep creaking legacy cores or endure a ~10 year replacement cycle. When faced with open heart surgery, inertia always won. Now AI agents can instantly automate high-impact workflows that meaningfully drive down expense & loss ratios. Think submission processing, claims intake, and broker portals. We believe there’s an opportunity to start with services-as-software that tackles those operational workflows and collects the context and data along the way, earning its way into becoming first a shadow core and then the real core.

What Are We Looking For?

Primary leads pre-seed and seed rounds with $1-10M checks. We back founders who are building from conviction, not consensus.

- Team over market timing -- at seed, we’re betting on founders and their ability to navigate twists and turns as the future unfolds. We want builders who are customer-obsessed, product-driven, and AI-native. Slope over intercept.

- Sell the outcome, not the platform -- the best companies in this cycle will be measured by what they deliver, not what they enable. FDEs, not sales engineers.

- Paths to compounding moats -- this era of AI will be defined by last-mile delivery. It’s getting easier to build, but that does not mean it’s easier to figure out “what” to build. We believe that the core tenets that lead to structural moats still ring true: capturing proprietary data sets, network effects, and deep applied industry knowledge.

If this is you and you’re building against one of the trends above, we want to know you. Here’s where you can find us.

- Email: fintech@primary.vc

- X: @emily__man & @nwdaley

- LinkedIn: Emily Man & Nick Daley

Eos: identity security for the agentic era

Today, we’re excited to announce our investment in Eos, and AppViewX’s acquisition of Eos. To think this has all happened in just the last nine or so months is staggering. However, the story started with deep conviction in a market and some serendipity.

When we met Archit Lohokare and Kash Ivaturi in the summer of 2025, it felt meant to be. For months, we had been thinking about the area of agent identity and access, so much so that we were considering incubating a business in this space.

In our view, the majority of the AI security startups at that point were focused on the obvious, low-hanging fruit issues of using ChatGPT and Claude. These included making sure sensitive data did not end up being fed into an LLM, or having a customer-facing chatbot go off the rails and make a bad business decision. These problems seemed like just the beginning – a wave of enterprise-grade problems was still to come. Chief among them were the issues of governance, access, and privilege. What does an AI agent access, and when? How should companies treat agents within their identity and governance frameworks? Who has permission to use what agents, and in what contexts? All of these problems were not getting enough attention, even though they represent the critical questions underpinning identity in the age of AI, a massive market that is up for the taking.

With this idea, and these open questions, we met Archit and Kash as they were beginning to form what would become Eos. The connection and alignment were instantaneous. Archit and Kash, having been leaders of identity and access management and governance products at CyberArk and Idaptive, had seen firsthand the disruption heading this market’s way because of agentic systems. Agents change the way we need to architect identity and governance programs within the enterprise. The traditional incumbents are not prepared for that disruption. This was the opportunity that led to Eos, and in the summer of 2025, we seeded the business and they were off to the races.

Today does not mark the end of that story, but a very exciting step change and acceleration of it. We are excited that Eos is being acquired by AppViewX, a leader in the Machine Identity Security, Certificate Lifecycle Management (CLM), PKI and Post-Quantum Cryptography (PQC) space. Archit and Kash will assume the CEO and CTO roles at the new combined company, and AppViewX will integrate Eos into its product offering, repositioning around a next generation AI Agent and Machine Identity Security platform.

We could not be more excited about this milestone. AppViewX provides Archit and Kash the platform and distribution resources to go after the Eos vision bigger and faster than they could have otherwise. AppViewX’s Machine Identity Security and CLM products should be a natural component of the Eos offering, and make the technology even stronger. In AppViewX, we saw a partner that could help get Eos to market faster, and with more impact, in a space that is getting hotter by the second.

We could not be happier for Archit and Kash, two unbelievably seasoned professionals and operators in the identity security space. There are no better builders in this market than them, and the combined Eos-AppViewX entity will be a force to be reckoned with in this next wave of Identity Security companies built for the AI and Post-Quantum era. We’re excited to be along for the ride.

A special thanks goes to Doug Steinberg, an Operator-in-Residence with us who spearheaded much of the research and diligence that led to our interest and conviction in this market. Without his hard work, the Eos story would have been very different, and it almost definitely would not have included us at Primary.

Congratulations to Archit, Kash, and team Eos!


Primary’s 2026 investment thesis for GTM Tech

Go-to-market is a space our team knows intimately well: many of us were previously buyers and users of these solutions, and Primary spends thousands of hours each year working with our portfolio companies on their sales, marketing and customer success rhythms. When we published our last GTM thesis, AI was just beginning to shake things up; today, that shakeup has accelerated dramatically. We have gained clarity on what truly matters for building a category-defining GTM company and are excited to hear your feedback.

GTM startups must fundamentally transform the company P&L

Our thesis: AI has finally made it possible to transform the GTM P&L, and the market is demanding companies act accordingly. Today’s best-in-class GTM solutions embrace these four market realities:

- Gross margin pressure requires companies to radically reduce operating expenses

The best companies are investing heavily in compute to stay competitive and eroding gross margin in the process. They simply must get more efficient with their operating expenses, but GTM financial metrics such as CAC payback and net magic number have been stagnant for years.

- AI-native companies are prompting an evolution to leaner org design

Growing revenue has historically been expensive and wildly inefficient due to the number of people required: human BDRs can only handle so many touches per week even with the newest tools, companies are forced to hire another middle manager for every X new AEs, and so forth. AI-native companies are achieving staggering productivity per employee, and are setting new expectations for what’s possible. Every company must reconsider the human intensity of their GTM motion.

- Function-specific software silos are collapsing

It’s finally possible to break down the barriers across functional software categories (e.g. marketing software vs. sales software vs. customer software).

- Companies will collapse and integrate traditional GTM roles

We are on the brink of a new “full-funnel GTM” role, removing the traditional functional specialization between Marketing - BDR - Sales - SC - CS - AM. It’s conceivable to have one person – backed by powerful agents – cover pipeline generation, sales, and ongoing customer management.

We are excited to back founders building AI-native solutions to enable this exciting new world.

Introducing PRIME

We are looking for founders or aspiring founders building GTM businesses with a direct-line impact on the P&L.

We've codified this as having demonstrable impact on PRIME:

- Productivity (Revenue per Employee)

- Retention (NRR or GRR)

- Investment Efficiency (Net Magic Number / S&M Efficiency)

- Momentum (Top-Line Growth)

- Expense Reduction (Headcount and Cost Eliminations)

This is our non-negotiable filter for GTM investing. Companies who explain ROI through opportunity cost, marginal efficiency gains, or indirect metrics don't fit our mold.

Consider a category such as digital deal rooms: they may accelerate cycle times and marginally improve win rates, but there are multiple steps of math required to translate that to the P&L. Marginal gains are certainly valuable, but we are committed to backing the companies that seek to deliver an order of magnitude impact.
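To make the PRIME filter concrete, here is a minimal sketch of how the five metrics might be computed from one quarter of data. The field names, the quarterly retention window, and the net magic number convention used here (annualized net new ARR divided by prior-quarter sales and marketing spend) are illustrative assumptions, not definitions taken from this thesis; teams often measure retention over trailing twelve months instead.

```python
from dataclasses import dataclass


@dataclass
class QuarterSnapshot:
    """Hypothetical quarterly inputs; all field names are illustrative."""
    arr_start: float               # ARR at the start of the quarter
    arr_end: float                 # ARR at the end of the quarter
    churned_arr: float             # ARR lost from customers who left
    contraction_arr: float         # ARR lost from downgrades
    expansion_arr: float           # ARR gained from existing customers
    sm_spend_prior_quarter: float  # sales & marketing spend, prior quarter
    employees: int                 # headcount at the end of the quarter


def revenue_per_employee(q: QuarterSnapshot) -> float:
    """Productivity: ending ARR per employee."""
    return q.arr_end / q.employees


def gross_revenue_retention(q: QuarterSnapshot) -> float:
    """Retention (GRR): starting ARR kept, ignoring expansion."""
    return (q.arr_start - q.churned_arr - q.contraction_arr) / q.arr_start


def net_revenue_retention(q: QuarterSnapshot) -> float:
    """Retention (NRR): starting ARR kept, including expansion."""
    kept = q.arr_start - q.churned_arr - q.contraction_arr + q.expansion_arr
    return kept / q.arr_start


def net_magic_number(q: QuarterSnapshot) -> float:
    """Investment efficiency: annualized net new ARR per S&M dollar (assumed convention)."""
    return (q.arr_end - q.arr_start) * 4 / q.sm_spend_prior_quarter


def top_line_growth(q: QuarterSnapshot) -> float:
    """Momentum: quarter-over-quarter ARR growth."""
    return q.arr_end / q.arr_start - 1


def expense_reduction(cost_before: float, cost_after: float) -> float:
    """Expense reduction: fraction of a cost line eliminated."""
    return (cost_before - cost_after) / cost_before
```

A product with direct-line P&L impact should move at least one of these numbers without intermediate steps of math, which is the distinction the digital deal room example above is drawing.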

Founders: we thrive with serial entrepreneurs who are obsessed with their customers

We've learned that second-time founders are where we add the most value. Our strongest fit tends to be with serial entrepreneurs who value what we bring beyond a logo on the cap table: our network connectivity and operating experience. Exceptional talent is exceptional talent and we’re excited to meet any high-slope founder who shares our category conviction. But founders such as Amanda Kahlow at 1mind, Ganesh Ramakrishna at Lyric, Richard Harris at Black Crow AI and Jon Sherry at Alium exemplify the type of builders we're excited to partner with: they have unfinished business.

The founders we're drawn to are aggressive about their own GTM and deeply customer-obsessed. They understand you cannot build enduring enterprise value without enduring customer value, and they're constantly challenging their own assumptions based on what they learn in the market. They obsess over the risk of the gross retention apocalypse. At the same time, they're Challenger sellers—teaching the market where it should be headed. Importantly though, the founding team must marry this customer obsession with world-class technical acumen.

The path to full-stack GTM

We love hearing a founder’s articulation for how they will bring this new GTM operating system to life. We’ve thought extensively about wedge vs. compound startup tradeoffs and are excited to share our own thoughts:

Wedge Strategy

The wedge approach starts narrow but has a strategic vision to become a platform. Wedge products must still meet PRIME criteria and demonstrate clear P&L impact because that guarantees the right to ultimate share-of-wallet expansion. But beyond financial impact, wedge products need two additional qualities:

- Obvious right to win – whether it’s a “Zero CAC” founder with a rolodex of prospects who already trust them, a team of 10x engineers shipping products at unprecedented speed or a former operator with an earned secret, the companies we back must have a clear right to win the lane of their wedge.

- Own the context – the best wedges embed into the bloodstream of workflows and data, earning the right to capture net-new signals or refine existing data into decision-grade context. If a wedge cannot maintain and resolve context, it will struggle with durability regardless of the initial P&L impact. Playing the context angle correctly also makes launching subsequent SKUs more seamless and more defensible.

1mind is a great example of a successful, context-rich wedge approach. When we led the Seed round, we were investing in Amanda’s vision to build an end-to-end AI brain for all GTM functions from first prospect engagement through ongoing support, but we recognized they needed to start with a wedge. 1mind carefully selected an inbound AI BDR wedge that facilitated thousands of customer conversations, enabling impressive context. The data ingestion needed to train their AI “Superhumans” combined with that conversational context positioned them to expand into adjacent workflows with an unfair advantage. 1mind’s right to win was Amanda’s track record: she had previously built a category-defining unicorn with 6sense, and had an incredible rolodex of prospects ready to take her at her word.

1mind also exemplifies PRIME: it makes sellers more efficient by having AI handle upfront conversations so reps can manage bigger deal loads (Productivity), increases efficiency through reduced hiring needs, thereby reducing CAC and increasing net magic number (Investment Efficiency), and drives obvious expense reduction because teams can hire fewer people (Expense Reduction).

"Wider" / Compound Strategy

The compound approach goes full-stack from the onset. The core question: why are customers trusting you for this risky, transformative move? Because these plays command higher switching costs, we believe founder domain fluency is essential.

We recognize compound businesses take longer to build, particularly in the 0-1 GTM phase. During that extended building cycle, we're looking for a best-in-class approach to design and development partners as well as evidence of off-the-charts engineering and roadmap velocity. We love it when we hear comments along the lines of, “I can’t even keep up with our engineering team because we’re shipping so fast” (a recent verbatim from Amanda at 1mind!).

If you’re transforming the GTM P&L, get in touch

The GTM category is at an inflection point. AI has created both tremendous opportunity and tremendous noise. The winners will be the companies that don't just bolt on AI capabilities to existing workflows, but fundamentally reimagine how companies acquire, retain, and grow their customer base.

We're committed to finding category-defining plays early and supporting them relentlessly. If you're building something that transforms the GTM P&L, we want to hear from you.


Investing in the reinvention of cybersecurity

We are at a crucible moment in cybersecurity, one in which AI is changing everything. Just last week, Anthropic caught a near-successful cyber espionage attack that was 80%-90% executed by AI agents. These AI agents ran many parallelized attacks at thousands of requests per second, the kind of speed and sophistication a human could only dream of. The world is getting scarier, and CISOs wake up every day confronting this reality.

At the same time, AI is a new technology platform that presents internal risks. Top-down mandates from C-suites and boards mean that AI experimentation is ubiquitous, creating new threat vectors. Additionally, new hardware and configurations in the data center mean that the physical infrastructure to protect is different too, necessitating new solutions.

This change is scary, but also exciting. For too long, cyber has been bogged down in the SPM craze—“posture management” and dashboards. Of course, visibility is necessary, but the dashboard sprawl has become comical. A CISO’s main problem is no longer blindspots, but rather an excess of data. Alert after alert, and security teams seemingly get farther from actual solutions. In the words of a CISO I know well, many tools are just “more software for software.”

The evolution of the market in this way makes sense. Some visibility-focused startups have become category-defining, generational companies. In pursuit of the next Wiz, VCs have over-funded visibility. Despite some successes, most of these companies have contributed to the most fragmented, sprawling, and redundant stack in the history of cybersecurity.

AI has the potential to break this pattern by automating workflows, reducing costs, and finding better signals in the noise. We see this happening across all security categories, from identity to data to endpoint, and have made investments accordingly. The time to build in cyber is now—to give security leaders less, not more; to think of cyber from a blank slate with AI at the center; to use the data the SPMs of the last decade have generated to deliver unique value.

And New York is a great place to build. Although Primary is much bigger than we once were and we now invest globally, the firm began with a bet on New York. A bet that New York is the greatest city in the world, with the highest density of customers and talent, and that founders should be here. This is true for cyber. New York is home to the largest Israeli community outside of Israel, where a disproportionate number of cyber companies are born. And, as the center of the financial world, New York is home to some of the biggest, most sophisticated cyber customers in the world.

We are seeing the gravity around New York strengthen, as companies like Wiz, Cyera, and Axonius have all relocated their headquarters here. Today, there are thousands of security operators in this city, and that number is growing. Undoubtedly, many of these operators will start great companies of their own. We believe we are on the verge of an explosion of the New York cyber ecosystem.

Today, I am humbled that my promotion to Partner is being announced to the outside world. Primary is a deeply special organization for me. I first met the team when I was 25, before going to business school, having spent my prior years working on a startup in NYC. As a born and bred New Yorker who knew how hard the earliest days of startup building were, Primary stood out. “Startups are hard; founders deserve better” has been and is always at the core of what we do, and our ability to execute on that truth and deliver value for founders has grown exponentially since I joined the firm.

I am especially excited about leading the cyber practice for Primary, at a time when security is so existential and great upstarts so needed, and in a place that is poised to become a hotbed for cyber activity. We are working on a lot of interesting things here, and have big dreams. We do not invest in many companies—I will make maybe two investments a year—but the companies we do invest in will be big swings, and we will try to help them in a differentiated way unmatched by any seed firm on the planet.

The challenge of building a cyber seed investing practice is real, but we believe our strategy and positioning are unique. If you are reading this, and intrigued to learn more about our approach, please get in touch. Today I am kicking off a search for an associate to help me with these efforts. This person will be my partner-in-crime, and together, we will define the strategy and investment decisions of the cyber practice at Primary.

The reinvention of cyber in an AI age starts with startups, and amazing customers, advisers, and investors who help them on their journey. If you want to be a small part of this transformation, let’s talk.


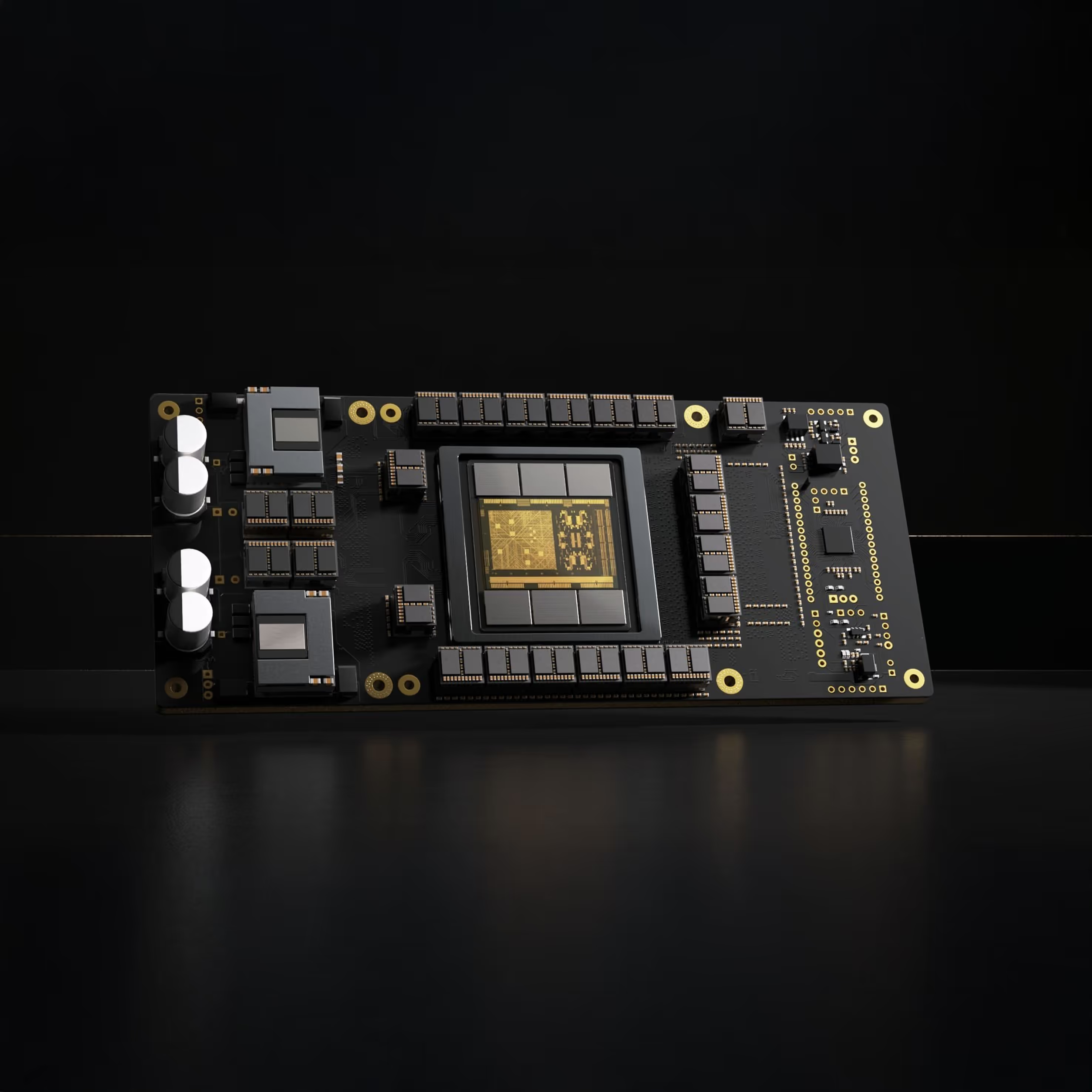

The Biological Computing Company raises $25M Seed

The Biological Computing Company (TBC) is ready to tell the world what they’re building. Founders Alex Ksendzovsky, CEO, and Jon Pomeraniec, COO, have led the company to use living neural networks to develop and optimize AI architectures in a commercial setting. This investment pushed the boundaries of our compute thesis for three reasons: one, Alex and Jon live in that founder sweet spot of brilliant, on a mission, and wildly different; two, their tangible results are the stuff of sci-fi but very real; and three, it’s exactly what we’re looking for — the belief that radical breakthroughs in compute are needed to meet AI’s demand for efficiency, performance, and scalability and that outlier founders with perspectives born of unique life experiences will build that future.

To understand TBC from first principles, we start with the fact that the brain is a million times more energy efficient than the best silicon today. It operates on roughly 20 watts of power to deliver an exaflop of computational power. You’d need 120K watts of power for NVIDIA’s Blackwell to deliver the same throughput.

For decades, neuroscientists have tried to translate the brain's functionality into digital systems. What if, instead of translating the brain, we let neurons compute on their own? If we can harness the computing power nature intrinsically provides us, we can unlock capabilities that silicon alone cannot.

These facts have been driving Alex and Jon for two decades. In the early 2000s, Alex was studying philosophy of mind in college and saw a visiting professor demo a robot powered by a neuron. He immediately intuited the potential to extract the immense power of the brain for computation. Since then, Alex and cofounder Jon – both neurosurgeons from UMD and Penn – have had a decades-long partnership through medical school, surgery roles, and research positions. After making rapid progress in their research around the brain’s computing capabilities in 2021, as covered by Fortune’s Term Sheet, they started The Biological Computing Company to focus on building computers for the future – out of neurons. This is not neuromorphic or “brain-inspired” chip design. This is harnessing the intrinsic and evolutionary power of the brain for compute.

The Biological Computing Company is building an organic computing platform that connects real living neurons with modern AI, making frontier models more stable, scalable and dramatically more efficient. TBC is able to harness intelligence, connecting neurons to electrodes, which then demonstrate superior efficiency to silicon for specific computational tasks. With its first product, TBC is showing a 23x retained improvement in video model efficiency – their team encodes real world data into living neurons, then decodes the neural activity into richer representations that have been mapped to state-of-the-art AI.

TBC is also using biological compute to extract principles from living neural networks, informing the development of novel AI architectures. In doing so, the company is not only augmenting today’s models, but shaping how future models will be built. This marks the first true commercialization of biological computing – and a critical step toward a world where biological and digital compute operate together.

We’re proud to have led The Biological Computing Company’s $25M Seed round, joined by Builders VC, Refactor Capital, Wonder Ventures, E1 Ventures, Proximity and Tusk Ventures. This is entirely new technology and we don’t know exactly how the market around it will develop. We believe in founders shaping the arc of history by doing something that people previously thought to be impossible. We look forward to supporting them in their journey. If you are interested in building at this frontier, TBC is hiring.

Etched’s Series A to revolutionize AI hardware with purpose-built LLM chips

"Move fast. Speed is one of your main advantages over large competitors."

This quote from Sam Altman encouraged us to venture into the daunting battlefield that is the semiconductor industry and lead Etched’s seed round about 15 months ago.

And it is speed of execution that gives us confidence in the future of superintelligence that Etched will enable. Indeed, we believe they will set a world record for fastest time to tape out for such a complex chip. This is fitting for a company building a chip that will be orders of magnitude faster than NVIDIA’s latest GPU, when running inference on transformer models.

Today, Etched is announcing a $120 million Series A to bring the same vision they pitched over a year ago to reality.

Hardware, not software, is the biggest bottleneck to truly magical AI experiences: artistic masterpieces like the next Titanic or Beethoven’s 9th produced by AI; agents that perform tasks on the web at the speed of thought, planning and booking honeymoons and preparing memos. What is impossible today will be possible tomorrow, but only with better hardware. To understand the Etched thesis, we encourage you to check out a post from the team at Etched discussing their bet.

The Etched team epitomizes the greatness of Silicon Valley, right down to the 'silicon.'

The CEO, Gavin Uberti, is brilliant, mature beyond his years, visionary, committed to the craft of being a founder, obsessed with the details, and insanely passionate. We are believers in him, and think that what Sam Altman is to software in AI, Gavin will be to hardware. You can listen to his podcast on Invest Like the Best with Patrick O’Shaughnessy, whose firm Positive Sum is co-leading this round with us, here. Gavin is joined by his cofounders, Robert Wachen and Chris Zhu, both equally remarkable in their own rights. This year, they became one of the first teams to collectively receive the Thiel Fellowship.

The founders are joined by a powerhouse roster of semis professionals who are all driven to break the rules while leveraging their expertise. Mark Ross, the CTO, was previously the CTO at Cypress Semiconductor, which eventually sold in 2019 for almost $10 billion. Ajat Hukkoo, the VP of Hardware Engineering, was at Intel for nine years and Broadcom for 14 before joining Etched. Saptadeep Pal, the Chief Architect, cofounded Auradine. The bench at Etched goes deep and every single person on the team is driven by an ethos of speed.

Most silicon teams are composed of people from the same networks and companies. Etched is the opposite of that: people with different skills and perspectives who want to be part of something special and change the world. It’s a team that embodies the creativity and optimism that makes startups exciting—a group of engineers, coming together with intense, uncanny ambition to build something that industry insiders believe is impossible. This is the only way that radical progress ever happens. This is how we get to superintelligence.

Today, more than anything, we’re proud of the team at Etched and humbled to be a part of their journey.

We’re also excited to be collaborating with such an excellent group of investors, including not just Positive Sum, but also Hummingbird, Two Sigma, Skybox Data Centers, Fundomo, and Oceans as well as angels like Peter Thiel, Thomas Dohmke, Amjad Masad, Jason Warner, and Kyle Vogt.

Interested in joining this stellar team? Check out the more than a dozen open roles here and help build the future of superintelligence.


Why we doubled down on Inspiren

Senior living communities are at the heart of one of the most important demographic shifts of our time. An aging population, rising care costs, and persistent staffing shortages are forcing operators to do more with less—without compromising safety, dignity, or quality of life for residents. Yet most of the tools available today are point solutions: basic fall detectors, clunky emergency call buttons, or fragmented EHR systems that fail to keep pace with day-to-day realities.

Inspiren is changing that.

Their AI-powered hardware and software integrates real-time motion awareness, staff coordination, emergency response, and resident engagement into one cohesive ecosystem. From preventing falls to accelerating emergency response to improving care planning, Inspiren is redefining how senior living communities operate—while delivering measurable ROI to operators.

From Fall Prevention to Full-Stack Care Coordination

When we led Inspiren’s Seed round, the company had already built a best-in-class fall detection device. But CEO Alex Hejnosz and founder Michael Wang were thinking much bigger. They envisioned a full “intelligent ecosystem” for senior living, one that combined multiple hardware devices with a single software brain to give operators unprecedented visibility and control.

That vision is now a reality. In the past year, Inspiren has expanded from its core device to a comprehensive hardware and software product suite:

- AUGi for in-room activity sensing and fall detection

- Sense for high-risk bathroom coverage

- Staff Beacon for staff location and workflow optimization

- Help Button for residents to request help without wearing a pendant

- Inspiren HQ and Inspiren Mobile App, an AI software suite to give operators and staff real-time insights into the overall health of their communities

This unified approach does more than replace outdated systems: it collapses the need for multiple vendors into one platform, eliminating integration headaches and increasing staff efficiency. Dedicated clinicians partner closely with communities, ensuring clinical insights are communicated and applied in ways that maximize care quality, outcomes, and staff support. Most importantly, the platform keeps residents safer.

Why Now

The timing for Inspiren couldn’t be better. Over 10,000 people turn 65 every day in the United States. The senior living market is fragmented, underserved, and under growing operational pressure. Regulatory scrutiny around resident safety is intensifying, while financial risk from early move-outs and under-documented care is pushing operators to modernize.

At the same time, Inspiren’s combination of AI-powered sensing and integrated software is hitting an inflection point in cost and capability:

- Affordable hardware means mass deployment is now possible.

- AI-powered embedded workflows make adoption easy and ROI immediate.

- Regulatory and operational tailwinds are pushing toward data-driven, documented care.

In a market where speed of deployment is critical, Inspiren’s ability to get devices “on walls” faster than anyone else is a decisive advantage.

Why We Backed Inspiren—Again

We’re doubling down in Inspiren’s $100M Series B led by Insight Partners because we believe they are building the category-defining operating system for senior living. Our conviction comes down to four key beliefs:

- The market is ripe for a platform that replaces fragmented point solutions with an integrated, AI-driven ecosystem.

- Inspiren has proven product-market fit with industry-leading retention, rapid ACV expansion, and clear ROI for operators.

- The economics work at scale, with a recurring software model, short payback periods, and a massive market opportunity.

- This team can execute, having consistently delivered growth and product velocity ahead of plan.

As the aging population grows and care demands intensify, senior living communities will need more than incremental tools. They’ll need a system that makes care safer, faster, and more efficient. We believe Inspiren is poised to be the market leader in this space.

We’re proud to continue our partnership with Alex, Mike, and the entire Inspiren team. The future of senior living is intelligent, connected, and compassionate, and Inspiren is leading that future.


AI-powered design review catching errors pre-construction

Bad design is one of the most persistent, overlooked, and expensive problems in construction. Change orders caused by coordination issues, missed specs, or incompatible designs aren’t just costly—they’re preventable.

In the U.S., hundreds of billions of dollars are spent on construction every year. Projects are chronically delayed and over budget, and with interest rates where they are, new development has become even more difficult in many markets. The majority of change orders could be caught in the pre-construction phase, but today’s review process is slow, expensive, and imperfect.

Large developers often outsource drawing reviews to third-party firms, paying hundreds of thousands of dollars for reports that take 6–20 weeks to deliver—and still contain errors. These delays and oversights ripple through every stakeholder, from architects to subcontractors, costing valuable time and eroding margins.

LightTable solves this. By applying AI-powered coordination and peer review, the company can eliminate up to two-thirds of change orders, improving project IRRs by 3–4 points. For real estate owners, this means delivering projects faster, on budget, and with fewer costly surprises. For architects and subcontractors, it means less time revisiting old projects to fix preventable mistakes.

The Right Wedge: Peer Review

We backed LightTable in 2024 based on a simple premise: peer review today is manual, expensive, and error-prone—and AI can do it better. Starting with coordination issues across disciplines creates a powerful wedge to expand into broader design optimization and collaboration.

Three things gave us conviction:

- Acute pain: Developers feel the cost and time impact most directly.

- Adoption leverage: They can push better tooling across architects and engineers.

- Workflow ownership: Controlling the design review process opens the door to downstream automation, from value engineering to inventory-aware specs.

Since funding in November, LightTable has signed product development and design partnerships or pilots with five of the top ten developers in the U.S., including Hines, The Related Group, Greystar, Mill Creek, and Alliance. These pilots are already driving real-world feedback and product iteration, setting the stage for enterprise contracts with annual values that could reach seven figures.

Built With—and For—the Industry’s Best

LightTable’s go-to-market strategy is rooted in deep collaboration. Through these design partnerships, the team is running real projects and building in lockstep with enterprise users.

Paul Zeckser, Dan Becker, and Ben Waters didn’t just set out to build better design tools—they’re rethinking how buildings get designed from the ground up.

Paul, a product-first leader with sharp commercial instincts, spent over a decade shaping HomeAdvisor before leading product at Sealed. He’s known for turning big product visions into real market wins.

Dan, a seasoned AI and simulation engineer, founded a startup that was acquired by DataRobot and helped enterprise teams adopt AI. Frustrated by how slowly legacy players like Autodesk move, he decided to build LightTable: an AI-native company already shipping faster than most incumbents can prototype.

Ben, a trained architect who has worked at SOM and Gensler, knows firsthand the pain of coordinating large drawing sets, only to see RFIs and change orders pile up. He was convinced AI could transform this archaic practice of human-only review and deliver massive ROI for builders.

Together, they’re the team with the perfect set of backgrounds to build for speed—and for a smarter, more intuitive future in design.

Why We’re Excited

LightTable is starting where the pain is most acute—pre-construction peer review—and building a platform that could become the coordination layer for the entire industry. The combination of early traction with top developers, a pragmatic and valuable product, and a sharp, fast-moving team makes this exactly the kind of founder-led business we want to partner with.

We’re proud to be backing Paul, Dan, Ben, and the team as they redefine how better buildings get built, long before anyone breaks ground.


The gas turbine bottleneck reshaping energy infrastructure

Electricity costs are rising fast. One midstream energy CFO in Texas told us his company’s rates have climbed from $0.35/MWh in 2021 to nearly $0.70/MWh today. “This is getting ridiculous,” he said. “We used to say the price at the pump decides elections. Going forward, it’s going to be the price at the meter.”

Similarly, an executive responsible for the data center buildout at Microsoft asks, “If every producible watt is already committed for the next five years, where can I get more watts?”

That question has become the defining obsession of this moment. AI companies, hyperscalers, and industrial manufacturers are all competing for the same scarce resource: firm, dispatchable electricity. Yet turbines, the single most important piece of equipment required to make that power, are suddenly the scarcest of all.

There are effectively only three relevant manufacturers that can produce large-scale gas turbines: GE Vernova ($162B market cap), Siemens Energy ($91B), and Mitsubishi Heavy Industries ($84B). These machines sit at the center of the modern power plant. They are the hardware that converts fuel into motion and motion into electrons. Today, they are booked solid for years. GE, Siemens, and Mitsubishi are each reporting order backlogs that stretch roughly five years, with many delivery slots already sold into the next decade.

Rumors are circulating in Washington about just how acute this has become. A contact close to the Department of Energy’s new venture fund (a kind of “In-Q-Tel for energy”) told us the administration is even considering whether to pressure allies with outstanding GE turbine orders to release them back to U.S. buyers. It sounds extreme, but that’s the level of urgency building around this bottleneck.

What a Gas Turbine Actually Is

A gas turbine is, at its core, an air compressor, a combustion chamber, and a spinning shaft. Air is compressed, mixed with fuel (usually natural gas), and ignited. The expanding gases turn the turbine blades, which drive a generator to produce electricity. In a simple-cycle setup, that’s the whole process. In a combined-cycle plant, the hot exhaust is captured to make steam and power a second turbine, raising overall efficiency and reducing emissions.

These are enormous machines. A single heavy-duty turbine can weigh more than 400 tons, stretch nearly 50 feet long, and generate anywhere from 100 to 400 megawatts, enough to power a mid-sized city. Smaller aeroderivative models, adapted from jet engines, are used for distributed or peaking power. Each large turbine costs roughly $50-100M depending on its class and configuration. It is elegant, brutally engineered machinery, and the workhorse of global baseload power.

How We Got Here

The roots of the shortage go back two decades. In the early 2000s, gas plants were being built everywhere. Then the 2008 crash hit, followed by another glut in the mid-2010s. Orders evaporated. OEMs shut factories, laid off skilled labor, and merged their supplier bases. The “painful overbuild” became a corporate cautionary tale as the turbine market crashed in 2018.

By the late 2010s, renewables dominated investor attention. Gas turbine divisions were treated as cash cows, not growth engines. Capacity stayed flat even as demand for electricity quietly began to climb again. Then came AI.

Starting in 2023, data-center power requirements exploded. Hyperscalers that once drew tens of megawatts suddenly needed hundreds. Natural gas looked like the fastest, most practical bridge to new capacity, but the world only had three major manufacturers left, and none had invested to scale. With the fresh memory of 2018 and some uncertainty about how much energy the AI buildout would actually require, they weren’t rushing to ramp capacity again.

Siemens Energy now reports a €136 billion backlog, the largest in its history. GE Vernova has roughly 55 gigawatts of gas orders in its queue and plans to expand from about fifty to eighty heavy-duty units a year by 2026. Mitsubishi Power says it will double production, but is already sold out into 2028. Even if all three deliver on their expansion plans, total output might rise only twenty to twenty-five percent—nowhere near enough to meet demand.

Anatomy of a Shortage